In my previous blog post on flat techniques, I demonstrated how you can use a flat representation for nested data, explored searching and structural manipulation of this kind of format, but did not perform any numerical calculations – that’s what I’m going to look at now. This is also the topic of a classic Quote Quad paper by ‘Boolean’ Bob Smith. If you’re interested in discovering more once you’ve finished reading this blog post, I urge you to read that paper.

With numeric data, it’s much harder to use an embedded delimiter for partitioning, as there’s unlikely to be a choice of delimiter that will never be part of our data. Therefore, I’ll use a separate Boolean vector indicating the start of each partition (I showed a format like this in the last post, but didn’t put it into practice). Here’s an example:

test ←3 1 4 1 5 9 2 7

starts←1 0 0 1 0 1 0 1

starts⊂test ⍝ the nested vector this represents

┌─────┬───┬───┬─┐

│3 1 4│1 5│9 2│7│

└─────┴───┴───┴─┘

Partitioned Sum

Let’s look at the basic plus reduction, +/. I want to use our partition vector to do the equivalent of +/¨ on the partitions.

+/¨starts⊂test ⍝ the goal

8 6 11 7

The trick do this with the partition vector is to use a +\ and sample the results at the ends of sub-vectors:

[

test

+\test

starts ⍝ start of each sub-vector

1⌽starts ⍝ end of each sub-vector

]

3 1 4 1 5 9 2 7

3 4 8 9 14 23 25 32

1 0 0 1 0 1 0 1

0 0 1 0 1 0 1 1

(1⌽starts)/+\test ⍝ sample +\test at the end of each sub-vector

8 14 25 32

The important thing to notice here is that 8 is the sum of the first sub-vector, 14 is the sum of the first and second sub-vectors, 25 is the sum of the first, second, and third sub-vectors, and so on. This is the same as +\+/¨starts⊂test:

+\+/¨starts⊂test

8 14 25 32

To recover the sum of each sub-vector, I can undo the +\ by finding the pairwise differences between the results:

¯2-/0,(1⌽starts)/+\test

8 6 11 7

It’s time to try it on some larger data! I’ll need some random numbers, and some random partition points.

numbers←?1E6⍴1000 ⍝ random (whole) numbers

starts←0.8<?1E6⍴0 ⍝ random partition points

(⊃starts)←1 ⍝ must start with a 1

nested←starts⊂numbers ⍝ to compare against

[10↑numbers ⋄ 10↑starts] ⍝ let's look at it

123 898 773 377 564 395 306 673 84 62

1 0 0 1 0 0 0 0 0 0

Now, do I see a speed improvement by using the flat version of +/¨?

]RunTime -c "+/¨nested" "¯2-/0,(1⌽starts)/+\numbers"

+/¨nested → 2.7E¯3 | 0% ⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕

¯2-/0,(1⌽starts)/+\numbers → 1.3E¯3 | -53% ⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕

Great, it’s around twice as fast. I didn’t include the cost of converting between formats here – including the starts⊂numbers in this comparison would tip the scales even further.

It doesn’t end here, though. I generated partitions with the expression 0.8<1E6⍴0, which includes a 1 approximately every 5 places. By changing that 0.8 constant, I can change the density of 1s in our partition vector, thereby controlling the size and count of sub-vectors that the data is chopped up into. When I increase it, there will be fewer, larger, sub-vectors; when I decrease it, there will be more, smaller, ones. Changing this constant has an effect on the relative performance of the flat method.

Using 0.5:

⍝ .. remake data ..

]RunTime -c "+/¨nested" "¯2-/0,(1⌽starts)/+\numbers"

+/¨nested → 6.3E¯3 | 0% ⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕

¯2-/0,(1⌽starts)/+\numbers → 1.8E¯3 | -72% ⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕

Using 0.9:

⍝ .. remake data ..

]RunTime -c "+/¨nested" "¯2-/0,(1⌽starts)/+\numbers"

+/¨nested → 1.7E¯3 | 0% ⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕

¯2-/0,(1⌽starts)/+\numbers → 1.1E¯3 | -32% ⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕

Using 0.99:

⍝ .. remake data ..

]RunTime -c "+/¨nested" "¯2-/0,(1⌽starts)/+\numbers"

+/¨nested → 2.1E¯4 | 0% ⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕

¯2-/0,(1⌽starts)/+\numbers → 5.8E¯4 | +184% ⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕

This reveals a lot. The greater the constant, the worse our flat version becomes in terms of performance. This makes sense – nested arrays bring with them some overheads (as discussed in my previous blog post on this topic), and the number and size of nested arrays will have an effect on these overheads. There’s clearly a turning point at which the extra effort we’re putting in for our flat version outweighs the benefits we get by avoiding nesting. Ultimately, it depends on the size of the partitions that you’re working with.

It should be emphasised that the best benchmark is your own application running on real data. You might want to use a flat partitioned +/¨, but you might find that your partitions are too large to give you any benefit. You should check that it gives you an improvement!

There’s another caveat to be aware of when using these flat techniques for numeric calculations: non-whole numbers can cause problems. For example, here’s the partitioned +/ on some non-integer data:

numbers+←?1E6⍴0 ⍝ add a fraction to each

nested←starts⊂numbers

(+/¨nested)≡(¯2-/0,(1⌽starts)/+\numbers)

0

What’s happening here? Is the method wrong? Well, no, but the result is different. The reason is that non-whole numbers are stored with a floating-point representation in the interpreter (64-bit binary floating-point, under the default ⎕FR←645). The issue with this is that addition on floating-point numbers is not associative, that is, (a+b)+c might be a tiny bit different from a+(b+c). We are relying on the associativity of addition to ‘undo the +\’, so some small differences are going to build up.

⎕CT allows a level of ‘fuzziness’ in equality comparisons, but not enough to cover all the differences in the example. It is important to be aware that, when you have non-whole numbers, the partitioned versions of numeric operations can accumulate some errors due to the behaviour of floating-point arithmetic. If you’re working in a context where you need exactly the same results as a regular +/¨, you might need to stick to the nested format.

Partitioned Any (Or-Reduction)

When I looked at counting words containing an 'a' in the last post, I teased you by mentioning some expressions that I would return to. Here are the two expressions that I promised to investigate further:

+/2</0,(1⌽V=';')/+\V='a'

281193

(V=';'){+/(⍺/⍵)≥a/1⌽a←⍺/⍨⍵∨⍺}V='a'

281193

You might have noticed that the first of these expressions is very similar to the partitioned sum from the previous section but with ¯2-/ replaced by 2</. This is essentially performing a ∨/ on each word, after comparing with 'a'. But how does this compare to the partitioned +/?

Some test data:

bools ←0 1 0 1 1 1 0 0 0 1 0 0 0

starts←1 0 1 0 0 1 0 0 1 1 1 0 0

I’m focusing on a Boolean ∨/ here, so just 1s and 0s. The starts vector chops up bools as follows:

starts⊂bools

┌───┬─────┬─────┬─┬─┬─────┐

│0 1│0 1 1│1 0 0│0│1│0 0 0│

└───┴─────┴─────┴─┴─┴─────┘

In this context, I can interpret +\bools as the number of 1s that have appeared in bools, up to and including each element:

[

bools

+\bools ⍝ number of 1s seen so far

1⌽starts ⍝ ends of each sub-vector

]

0 1 0 1 1 1 0 0 0 1 0 0 0

0 1 1 2 3 4 4 4 4 5 5 5 5

0 1 0 0 1 0 0 1 1 1 0 0 1

By sampling from the end of each sub-vector with (1⌽starts)/, I can obtain the number of 1s that appeared in or before each sub-vector:

(1⌽starts)/+\bools

1 3 4 4 5 5

This vector shows which sub-vectors included a 1, as these are the places where the cumulative count of 1s increases. I can find those places with a pairwise <:

2</0,(1⌽starts)/+\bools

1 1 1 0 1 0

∨/¨starts⊂bools ⍝ check against the nested version

1 1 1 0 1 0

The difference from the flat +/ (which used a pairwise subtraction) is due to the fact that there the magnitude of the difference between each sub-vector was important, but here it is only relevant that there is a difference at all.

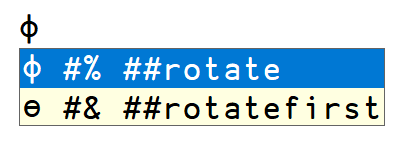

If this works so well, why did I show you two different expressions for a partitioned ∨/? The expression I just worked through uses an integer vector (+\bools) to determine its result. The other expression is trickier to understand, but does everything using just Boolean vectors (I’ll show the details later on). This means a boost in performance:

(starts bools)←0.9<1E6?⍤⍴¨0 0

(⊃starts)←1

nested←starts⊂bools

]RunTime -c "2</0,(1⌽starts)/+\bools" "starts{(⍺/⍵)≥a/1⌽a←⍺/⍨⍵∨⍺}bools"

2</0,(1⌽starts)/+\bools → 7.8E¯4 | 0% ⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕

starts{(⍺/⍵)≥a/1⌽a←⍺/⍨⍵∨⍺}bools → 2.3E¯4 | -71% ⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕⎕

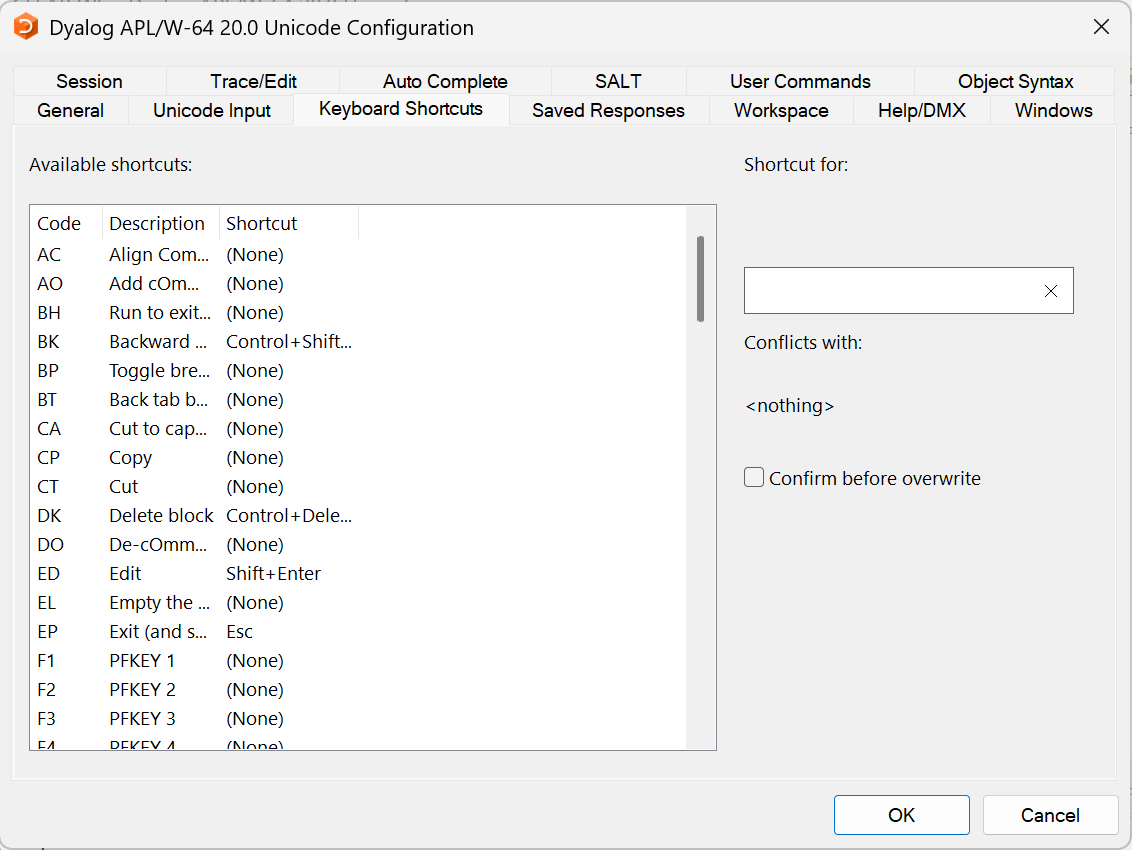

This is due to a trick that the Dyalog interpreter does with Boolean arrays. Since the only two values that need to be stored are 0 and 1, the interpreter only uses a single bit to store each value, compared to between 8 and 64 bits for arbitrary numbers or characters. This saves on space, since 8 or more values can be stored in the same space as a number takes up. It also saves on time in many cases, as your CPU has dedicated instructions for Boolean operations on bits and because less data needs to be moved into and out of the CPU.

{(⍺/⍵)≥a/1⌽a←⍺/⍨⍵∨⍺} is not an easy function to understand. It’s easier to understand by breaking it down and understanding what the intermediate results really mean at each step. Some complications come from 1s in the data occupying the same index as 1s in the partition vector. So, for now, I’ll use some sample data that doesn’t include that case:

bools ←0 1 0 0 1 1 0 0 0 0 0 1

starts←1 0 0 1 0 0 1 0 0 1 0 0

starts⊂bools ⍝ nested vector represented by this partition vector

┌─────┬─────┬─────┬─────┐

│0 1 0│0 1 1│0 0 0│0 0 1│

└─────┴─────┴─────┴─────┘

Next, bools∨starts gives us a mask of the places that are either beginnings of a partition or a 1 in the data:

[bools ⋄ starts ⋄ bools∨starts]

0 1 0 0 1 1 0 0 0 0 0 1

1 0 0 1 0 0 1 0 0 1 0 0

1 1 0 1 1 1 1 0 0 1 0 1

Using this mask to compress starts returns something very interesting. In this new vector, a 1 corresponds to the start of a partition, and a 0 corresponds to a 1 inside a partition.

┌─────┬─────┬─────┬─────┐

starts⊂bools: │0 1 0│0 1 1│0 0 0│0 0 1│

└↑─↑──┴↑─↑─↑┴↑────┴↑───↑┘

└┐└┐ ┌┘┌┘┌┘┌┘┌────┘ │

starts/⍨bools∨starts: 1 0 1 0 0 1 1 0──────┘

With this sample data, where there are no 1s at the start of partitions, it now becomes fairly straightforward to see whether there’s a 1 in a partition – if a 1 in the new vector is followed by a 0, then there’s an internal 1, otherwise, if it’s followed by a 1, then there isn’t. I can easily extract the value following each 1 in this vector:

a←starts/⍨bools∨starts

a/1⌽a

0 0 1 0

A 0 here indicates a 1 after the first place in a partition, while a 1 indicates the absence of that, so I need to flip the bits:

~a/1⌽a

1 1 0 1

∨/¨starts⊂bools

1 1 0 1

If I put all this together in a function, I get {~a/1⌽a←⍺/⍨⍵∨⍺}. But I’ve not finished yet! This is different from the original function because I’m still not handling the case where a partition begins with a 1. I need to use different test data to investigate this case:

bools ←0 0 0 1 1 0 1 0 0 0 0 1

starts←1 0 0 1 0 0 1 0 0 1 0 0

starts⊂bools

┌─────┬─────┬─────┬─────┐

│0 0 0│1 1 0│1 0 0│0 0 1│

└─────┴─────┴─────┴─────┘

∨/¨starts⊂bools ⍝ what I want

0 1 1 1

~a/1⌽a←starts/⍨bools∨starts ⍝ what I get – a false negative!

0 1 0 1

In addition to 1s that are already in the result (which indicate a 1 appearing in a partition excluding the first place), I want to include a 1 when there is a 1 in the first place in a partition. I can see what each partition begins with by compressing the data vector with the partition vector:

starts/bools

0 1 1 0

Including these 1s in the result:

r←~a/1⌽a←starts/⍨bools∨starts

(starts/bools)∨r

0 1 1 1

Fantastic! Now the method works properly. There’s one minor tweak I can make, exploiting the fact that ∨~ is equivalent to ≥ on Boolean data:

(starts/bools)∨~a/1⌽a←starts/⍨bools∨starts

0 1 1 1

(starts/bools)≥ a/1⌽a←starts/⍨bools∨starts

0 1 1 1

And with that, I have all the pieces to construct the original function: {(⍺/⍵)≥a/1⌽a←⍺/⍨⍵∨⍺}.

Where to go for more

I’ve only taken a few small steps into the techniques for working with partitioned arrays. I’ve explored a few reductions, but what about the rest? What about scans? To learn more, I strongly encourage you to read Bob Smith’s paper on the topic, and to go to APLcart for a list of partitioned reductions and scans written with modern APL features.

Follow

Follow